International Journal of Scientific & Engineering Research, Volume 3, Issue 4, April-2012

ISSN 2229-5518

Some New Trigonometric, Hyperbolic and Exponential Measures of Fuzzy Entropy and Fuzzy Directed Divergence.

Jha P., Mishra Vikas Kumar

Abstract: New Trigonometric, Hyperbolic and Exponential Measures of Fuzzy Entropy and Fuzzy Directed Divergence are obtained and some particular cases have been discussed.

Index Terms: Fuzzy Entropy, Fuzzy Directed Divergence, Measures of Fuzzy Information.


1. Introduction: Uncertainty and fuzziness are basic to human thinking and to many real-world objectives. Fuzziness is found in our decisions, in our language and in the way we process information. The main use of information is to remove uncertainty and fuzziness. In fact, we measure the information supplied by an experiment by the amount of probabilistic uncertainty it removes, so a measure of uncertainty removed is also called a measure of information, while a measure of fuzziness is a measure of the vagueness and ambiguity of uncertainties. Shannon [2] used “entropy” to measure the degree of randomness in a probability distribution. Let X be a discrete random variable with probability distribution $P = (p_1, p_2, \ldots, p_n)$ in an experiment. The information contained in this experiment is given by

$$H(P) = -\sum_{i=1}^{n} p_i \log p_i,$$

which is the well-known Shannon entropy.
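As a small numerical illustration of Shannon entropy, $-\sum_i p_i \log p_i$, the following sketch evaluates it for three distributions. The function name `shannon_entropy`, the `base` parameter and the sample distributions are assumptions made for this example only; the convention $0 \log 0 = 0$ is the usual one.

```python
import math


def shannon_entropy(p, base=math.e):
    """Return -sum(p_i * log(p_i)), taking 0 * log(0) as 0."""
    if any(pi < 0 for pi in p) or abs(sum(p) - 1.0) > 1e-9:
        raise ValueError("p must be a probability distribution")
    # Zero-probability outcomes contribute nothing to the sum.
    return -sum(pi * math.log(pi, base) for pi in p if pi > 0)


# Illustrative distributions (not taken from the paper).
uniform = [0.25, 0.25, 0.25, 0.25]  # maximum uncertainty over 4 outcomes
skewed = [0.7, 0.1, 0.1, 0.1]       # less uncertainty
certain = [1.0, 0.0, 0.0, 0.0]      # no uncertainty

for name, dist in [("uniform", uniform), ("skewed", skewed), ("certain", certain)]:
    print(name, shannon_entropy(dist, base=2))
```

The uniform distribution gives the largest value and the degenerate one gives zero, which matches the reading of entropy as uncertainty removed by the experiment.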

The concept of entropy has been widely used in different areas, e.g. communication theory, statistical mechanics, finance, pattern recognition and neural networks. Fuzzy set theory, developed by Lotfi A. Zadeh [8], has found wide application in many areas of science and technology, e.g. clustering, image processing and decision making, because of its capability to model non-statistical imprecision or vague concepts.

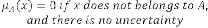

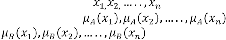

It may be recalled that a fuzzy subset A in U (universe of discourse) is characterized by a membership function which represents the grade of membership of as follows

In fact associates with each  a grade of membership in the set A. When is valued in

a grade of membership in the set A. When is valued in  it is the characteristic function of a crisp (i.e. nonfuzzy) set. Since

it is the characteristic function of a crisp (i.e. nonfuzzy) set. Since  and

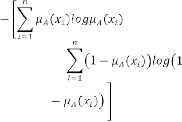

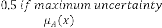

and  gives the same degree of fuzziness, therefore, corresponding to the entropy due to Shannon [2], De Luca and Termini [1] suggested the following measure of fuzzy entropy:

gives the same degree of fuzziness, therefore, corresponding to the entropy due to Shannon [2], De Luca and Termini [1] suggested the following measure of fuzzy entropy:

De Luca and Termini introduced a set of properties that are widely accepted as the criterion for defining any new fuzzy entropy. In fuzzy set theory, the entropy is a measure of fuzziness which expresses the average ambiguity or difficulty in deciding whether an element belongs to a set or not. So, a measure of average fuzziness $H(A)$ in a fuzzy set should have at least the following properties:

i) $H(A) = 0$ when $\mu_A(x_i) = 0$ or $1$.

ii) $H(A)$ increases as $\mu_A(x_i)$ increases from 0 to 0.5.

iii) $H(A)$ decreases as $\mu_A(x_i)$ increases from 0.5 to 1.

iv) $H(A) = H(\bar{A})$, i.e. $H(A)$ is unchanged when $\mu_A(x_i)$ is replaced by $1 - \mu_A(x_i)$.

v) $H(A)$ is a concave function of $\mu_A(x_i)$.
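The De Luca and Termini measure, $-\sum_i [\mu_i \log \mu_i + (1-\mu_i)\log(1-\mu_i)]$, can be evaluated numerically to see some of these properties at work. The sketch below is illustrative only: the helper names `_h` and `fuzzy_entropy` and the sample membership vectors are assumptions, and the checks are spot checks on a few values rather than proofs.

```python
import math


def _h(mu):
    """Per-element term -[mu*log(mu) + (1-mu)*log(1-mu)], with 0*log(0) = 0."""
    return -sum(t * math.log(t) for t in (mu, 1.0 - mu) if t > 0)


def fuzzy_entropy(memberships):
    """De Luca-Termini fuzzy entropy of a list of membership grades in [0, 1]."""
    if any(m < 0 or m > 1 for m in memberships):
        raise ValueError("membership grades must lie in [0, 1]")
    return sum(_h(m) for m in memberships)


# Crisp set: every grade is 0 or 1, so the entropy vanishes.
print(fuzzy_entropy([0, 1, 1, 0]))

# Most fuzzy set: every grade is 0.5, so each element contributes log 2.
print(fuzzy_entropy([0.5, 0.5, 0.5]), 3 * math.log(2))

# Replacing each grade mu by 1 - mu leaves the entropy unchanged.
a = [0.2, 0.9, 0.35]
print(fuzzy_entropy(a), fuzzy_entropy([1 - m for m in a]))
```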

IJSER © 2012

http://www.ijser.org


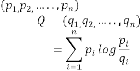

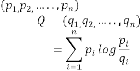

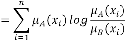

Kullback and Leibler [7] obtained the measure of directed divergence of a probability distribution $P = (p_1, p_2, \ldots, p_n)$ from the probability distribution $Q = (q_1, q_2, \ldots, q_n)$ as

$$D(P : Q) = \sum_{i=1}^{n} p_i \log \frac{p_i}{q_i}.$$

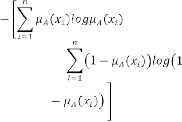

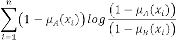
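For concreteness, the Kullback and Leibler directed divergence $\sum_i p_i \log (p_i / q_i)$ can be computed as in the sketch below. The function name `kl_divergence` and the two sample distributions are assumptions for illustration; terms with $p_i = 0$ are taken as 0 and the result is infinite when $q_i = 0$ but $p_i > 0$, which are the usual conventions.

```python
import math


def kl_divergence(p, q):
    """Directed divergence sum(p_i * log(p_i / q_i)) of P from Q."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue          # 0 * log(0 / q) is taken as 0
        if qi == 0:
            return math.inf   # P puts mass where Q has none
        total += pi * math.log(pi / qi)
    return total


# Illustrative distributions (not taken from the paper).
p = [0.5, 0.5]
q = [0.9, 0.1]
print(kl_divergence(p, p))  # zero when the distributions coincide
print(kl_divergence(p, q))  # positive
print(kl_divergence(q, p))  # differs from the line above: the measure is directed
```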

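Let A and B be two standard fuzzy sets with the same supporting points $x_1, x_2, \ldots, x_n$ and with fuzzy vectors $(\mu_A(x_1), \ldots, \mu_A(x_n))$ and $(\mu_B(x_1), \ldots, \mu_B(x_n))$. The simplest measure of fuzzy directed divergence, as suggested by Bhandari and Pal [3] (1993), is

$$D(A : B) = \sum_{i=1}^{n} \left[ \mu_A(x_i) \log \frac{\mu_A(x_i)}{\mu_B(x_i)} + (1 - \mu_A(x_i)) \log \frac{1 - \mu_A(x_i)}{1 - \mu_B(x_i)} \right],$$

satisfying the conditions:

i) $D(A : B) \geq 0$.

ii) $D(A : B) = 0$ if and only if $A = B$.

iii) $D(A : B) = D(\bar{A} : \bar{B})$.

iv) $D(A : B)$ is a convex function of $\mu_A(x_i)$.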
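The Bhandari and Pal measure, $\sum_i [\mu_A \log(\mu_A/\mu_B) + (1-\mu_A)\log((1-\mu_A)/(1-\mu_B))]$, and its four conditions can be spot-checked numerically. The following is a minimal sketch under stated assumptions: the names `_term` and `fuzzy_divergence` and the membership vectors are hypothetical, and the convexity check tests a single midpoint only.

```python
import math


def _term(a, b):
    """a * log(a / b), with 0 * log(0) = 0 and +inf when a > 0 but b == 0."""
    if a == 0:
        return 0.0
    if b == 0:
        return math.inf
    return a * math.log(a / b)


def fuzzy_divergence(mu_a, mu_b):
    """Bhandari-Pal fuzzy directed divergence of A from B."""
    if len(mu_a) != len(mu_b):
        raise ValueError("fuzzy vectors must have the same length")
    return sum(_term(a, b) + _term(1 - a, 1 - b) for a, b in zip(mu_a, mu_b))


# Illustrative fuzzy vectors (not taken from the paper).
A = [0.2, 0.7]
B = [0.4, 0.5]
comp = lambda v: [1 - m for m in v]

print(fuzzy_divergence(A, B))              # i)   non-negative
print(fuzzy_divergence(A, A))              # ii)  zero when A = B
print(fuzzy_divergence(comp(A), comp(B)))  # iii) same as D(A:B)

# iv) midpoint convexity in the first argument, for one pair of vectors.
A1, A2 = [0.1, 0.9], [0.6, 0.3]
mid = [(x + y) / 2 for x, y in zip(A1, A2)]
print(fuzzy_divergence(mid, B) <= (fuzzy_divergence(A1, B) + fuzzy_divergence(A2, B)) / 2)
```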

Later, Kapur [5], [6] introduced a number of trigonometric, hyperbolic and exponential measures of fuzzy entropy and fuzzy directed divergence. In Sections 2 and 3 we introduce some new trigonometric, hyperbolic and exponential measures of fuzzy entropy and of fuzzy directed divergence.

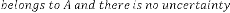

2. New Measures of Fuzzy Entropy

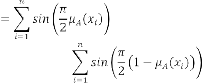

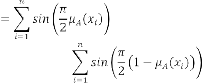

2.1 Trigonometric Measure of Fuzzy Entropy

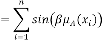

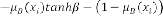

Consider the function  where  is a convex function which gives us

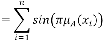

is a new measure of fuzzy entropy. In particular, for  is also a new measure of fuzzy entropy.  is a special case of  when  .

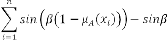

Another special case arises when  we get

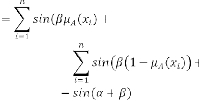

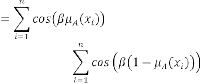

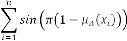

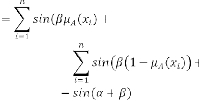

Another trigonometric measure of fuzzy entropy is

reduces to  when  .  reduces to  when  .  reduces to  when

is a 2-parameter measure of fuzzy entropy. If we put  we get

is a new measure of fuzzy entropy. Clearly, the measures of fuzzy entropy given above satisfy all the properties listed in Section 1, so they are valid measures of fuzzy entropy.
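One way to sanity-check a candidate measure of the form $H(A) = \sum_i f(\mu_A(x_i))$ against the five properties of Section 1 is to test the per-element function $f$ on a grid. The sketch below does this for $f(\mu) = \sin(\pi\mu)$, which is used here purely as an illustrative trigonometric candidate and is not claimed to be one of the measures derived in this section. The checker name, grid size and tolerance are assumptions, and a grid test gives numerical evidence only, not a proof.

```python
import math


def check_fuzzy_entropy_properties(f, steps=200, tol=1e-9):
    """Grid-test the per-element function f of H(A) = sum f(mu_i) on [0, 1].

    `steps` should be even so that 0.5 is a grid point.
    """
    grid = [i / steps for i in range(steps + 1)]
    vals = [f(m) for m in grid]
    half = steps // 2
    return {
        # i) zero for crisp grades 0 and 1
        "zero_at_crisp": abs(vals[0]) <= tol and abs(vals[-1]) <= tol,
        # ii) increasing on [0, 0.5]
        "increasing_0_to_half": all(vals[i] < vals[i + 1] for i in range(half)),
        # iii) decreasing on [0.5, 1]
        "decreasing_half_to_1": all(vals[i] > vals[i + 1] for i in range(half, steps)),
        # iv) unchanged under mu -> 1 - mu
        "symmetric": all(abs(vals[i] - vals[steps - i]) <= tol for i in range(steps + 1)),
        # v) concave: second differences are non-positive
        "concave": all(vals[i - 1] + vals[i + 1] - 2 * vals[i] <= tol for i in range(1, steps)),
    }


# Hypothetical trigonometric candidate, for illustration only.
candidate = lambda mu: math.sin(math.pi * mu)
print(check_fuzzy_entropy_properties(candidate))

# A function that is not a valid per-element entropy term fails some checks.
print(check_fuzzy_entropy_properties(lambda mu: mu * mu))
```

A candidate that fails any check on the grid can be rejected immediately; one that passes still needs the analytic argument from convexity.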


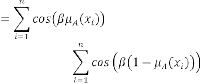

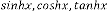

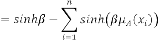

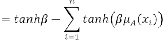

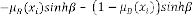

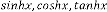

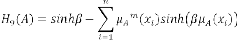

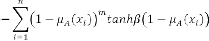

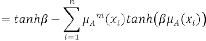

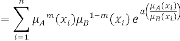

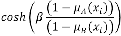

2.1 Hyperbolic Measure of Fuzzy Entropy

where

where

are all convex functions and gives us following valid measures of fuzzy entropy

are all convex functions and gives us following valid measures of fuzzy entropy

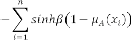

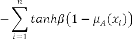

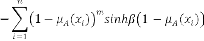

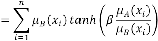

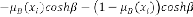

Since  are also convex functions for

are also convex functions for

, we get the following additional

, we get the following additional

measures of fuzzy entropy.

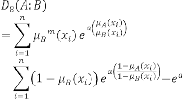

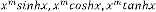

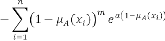

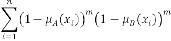

2.2 Exponential Measures of Fuzzy Entropy

Since  is a convex function when  we get the measure of fuzzy entropy

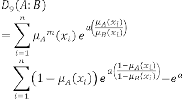

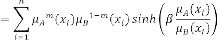

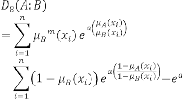

3. New Measures of Fuzzy Directed Divergence

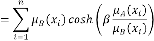

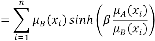

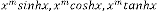

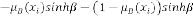

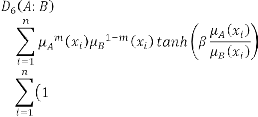

3.1 New Hyperbolic Measures of Fuzzy Directed Divergence

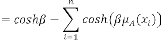

Using the convexity of  we get the following hyperbolic measures of fuzzy directed divergence.


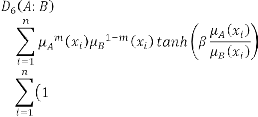

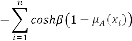

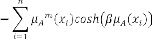

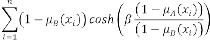

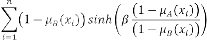

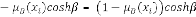

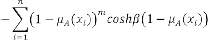

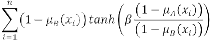

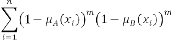

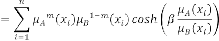

Again, since  are also convex functions for  , we get the following more general hyperbolic measures of fuzzy directed divergence.

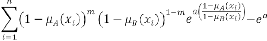

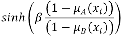

Special case for m=0 and m=1 are

Special case for m=0 and m=1 are

4. Conclusion

(21)

In section 2 and 3 by using the convexity of some trigonometric, hyperbolic and exponential function and satisfying the conditions of fuzzy entropy and fuzzy directed divergence we get some new trigonometric, hyperbolic and exponential measures of fuzzy entropy

and fuzzy directed divergence.

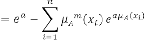

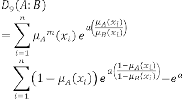

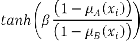

3.2 New Exponential Measures of Fuzzy

Directed Divergence

Since  is a convex function when

is a convex function when

we get the following measures of fuzzy directed divergence

we get the following measures of fuzzy directed divergence


Jha P., Department of Mathematics, Govt Chattisgarh P.G. College, Raipur, Chattisgarh (India)

Mishra Vikas Kumar, Department of Mathematics, Rungta College of Engineering and Technology, Raipur, Chattisgarh (India)

5. References

[1] A. De Luca and S. Termini, A Definition of a Non-probabilistic Entropy in the Setting of Fuzzy Sets Theory, Information and Control, 20, 301-312, 1972.

[2] C. E. Shannon, A Mathematical Theory of Communication, Bell System Technical Journal, 27, 379-423, 623-656, 1948.

[3] D. Bhandari and N. R. Pal, Some New Information Measures for Fuzzy Sets, Information Sciences, 67, 209-228, 1993.

[4] F. M. Reza (1948 & 1949), An Introduction to Information Theory, McGraw-Hill, New York.

[5] J. N. Kapur, Some New Measures of Directed Divergence, Wiley Eastern Limited.

[6] J. N. Kapur, Trigonometrical Hyperbolic and Exponential Measure of Fuzzy Entropy and Fuzzy Directed Divergence, MSTS

[7] S. Kullback and R. A. Leibler, On Information and Sufficiency, Annals of Mathematical Statistics, 22, 79-86, 1951.

[8] L. A. Zadeh, Fuzzy Sets, Information and Control, 8, 338-353, 1965.
