International Journal of Scientific & Engineering Research Volume 2, Issue 7, July-2011 1

ISSN 2229-5518

Facial Expression Analysis: Towards Optimizing Performance and Accuracy

Sujay Agarkar, Ayesha Butalia, Romeyo D’Souza, Shruti Jalali, Gunjan Padia

Abstract— Facial expressions play an important role in interpersonal relations, because humans convey a great deal of information visually rather than verbally. Although humans recognize facial expressions virtually without effort or delay, reliable expression recognition by machine remains a challenge. To automate recognition of facial expressions, machines must be taught to understand facial gestures. In support of this idea, we consider a facial expression to consist of deformations of facial components and their spatial relations, along with changes in their pigmentation. This paper envisages interpretation of relative deviations of facial components, leading to expression recognition of subjects in images. Many of the potential applications of automated facial expression analysis will require speedy performance. We propose approaches to optimize the performance and accuracy of such a system by introducing ways to personalize and calibrate it. We also discuss potential problems that may hinder accuracy, and suggest strategies to deal with them.

Index Terms—Facial Gestures, Action Units, Neuro-Fuzzy Networks, Fiducial Points, Missing Values, Calibration.

—————————— • ——————————

1 INTRODUCTION

FACIAL expression analysis and recognition is a basic process performed by every human every day. Each of us analyses the expressions of the individuals we interact with, to best understand their response to us. Even an infant can tell his or her mother’s smile from her frown. This is one of the most fundamental communication mechanisms known to man.

As the next step in Human-Computer interaction, we endeavor to empower the computer with this ability: to discern the emotions depicted on a person’s visage. This task, seemingly effortless for us, needs to be broken down into several parts for a computer to perform. For this purpose, we consider a facial expression to represent, fundamentally, a deformation of the original features of the face.

On a day-to-day basis, humans commonly recognize emotions by characteristic features displayed as part of a facial expression. For instance, happiness is undeniably associated with a smile, or an upward movement of the corners of the lips. This could be accompanied by upward movement of the cheeks and wrinkles directed outward from the outer corners of the eyes. Similarly, other emotions are characterized by other deformations typical to the particular expression.

————————————————

• Sujay Agarkar is currently pursuing a bachelor’s degree program in computer engineering in Pune University, India. E-mail: sragarkar@gmail.com

• Ayesha Butalia is currently pursuing a PhD degree program in computer science in India. E-mail: sragarkar@gmail.com

• Romeyo D’Souza is currently pursuing a bachelor’s degree program in computer engineering in Pune University, India. E-mail: dsouza.romeyo@gmail.com

• Shruti Jalali is currently pursuing a bachelor’s degree program in computer engineering in Pune University, India. E-mail: shrutijalali@gmail.com

• Gunjan Padia is currently pursuing a bachelor’s degree program in computer engineering in Pune University, India. E-mail: gunjan412@gmail.com

More often than not, emotions are depicted by subtle changes in some facial elements rather than by the obvious contortions that define the typical expression. In order to detect these slight variations, it is important to track fine-grained changes in the facial features.

The general trend in comprehending the observable components of facial gestures is to use the Facial Action Coding System (FACS), which is also a commonly used psychological approach. This system, as described by Ekman [12], interprets facial information in terms of Action Units, which isolate localized changes in features such as eyes, lips, eyebrows and cheeks.

The actual process is akin to a divide-and-conquer approach: a step-by-step isolation of facial features, followed by recombination of their interpretations in order to finally arrive at a conclusion about the emotion depicted.

2 RELATED WORK

Visually conveyed information is probably the most important communication mechanism used for centuries, and even today. As mentioned by Mehrabian [8], up to 55% of the communicative message is understood through facial expressions. This understanding has sparked an enormous amount of speculation in the field of facial gestural analysis over the past couple of decades. Many different techniques and approaches have been proposed and implemented in order to simplify the way computers comprehend and interact with their users.

IJSER © 2011 http://www.ijser.org


The need for faster and more intuitive Human-Computer Interfaces is ever increasing, with many new innovations coming to the forefront [1].

Azcarate et al. [11] used the concept of Motion Units (MUs) as input to a set of classifiers in their solution to the facial emotion recognition problem. Their concept of MUs is similar to the “Action Units” described by Ekman [12]. Chibelushi and Bourel [3] propose the use of GMM (Gaussian Mixture Model) for pre-processing and HMM (Hidden Markov Model) with Neural Networks for AU identification.

Lajevardi and Hussain [15] suggest the idea of dynamically selecting a suitable subset of Gabor filters from the available 20 (called Adaptive Filter Selection), depending on the kind of noise present. Gabor filters have also been used by Patil et al. [16]. Lucey et al. [17] have devised a method to detect expressions invariant of registration using Active Appearance Models (AAM). Along with multi-class SVMs, they have used this method to identify expressions that are more generalized and independent of image processing constraints such as pose and illumination. This technique has been used by Borsboom et al. [7] for feature extraction, whereas they have used Haar-like features to perform face detection.

Noteworthy is the work of Gevers et al. [21] in this field. Their facial expression recognition approach enhances the AAMs mentioned above, as well as MUs. Tree-augmented Bayesian Networks (TAN), Naive Bayes (NB) Classifiers and Stochastic Structure Search (SSS) algorithms are used to effectively classify motion detected in the facial structure dynamically.

Similar to the approach adopted in our system, P. Li et al. [19] have utilized fiducial points to measure feature deviations and OpenCV detectors for face and feature detection. Moreover, their geometric face model orientation is akin to our approach of polar transformations for processing faces rotated within the same plane (i.e. for tilted heads).

3 SYSTEM ARCHITECTURE

The task of automatic facial expression recognition from face image sequences is divided into the following sub-problem areas: detecting prominent facial features such as eyes and mouth, representing subtle changes in facial expression as a set of suitable midlevel feature parameters, and interpreting this data in terms of facial gestures.

As described by Chibelushi and Bourel [3], facial expression recognition shares a generic structure similar to that of facial recognition. Whereas facial recognition requires that the face is independent of deformations in order to identify the individual correctly, facial expression recognition measures deformations in the facial features to classify them. Although the face and feature detection stages are shared by these techniques, their eventual aim is different.

Fig. 1. Block Diagram of the Facial Recognition System

Face detection is commonly performed using the HSV segmentation technique. This step narrows the region of interest of the image down to the facial region, eliminating unnecessary information for faster processing.
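The HSV segmentation step can be illustrated with a minimal sketch. The threshold constants and the helper names `is_skin` and `face_bounding_box` are assumptions made for illustration only; they are not values or functions from our system, and real thresholds would have to be tuned to the acquisition conditions.

```python
import colorsys

# Hypothetical skin-tone thresholds in HSV (all channels scaled to 0..1).
# These bounds are illustrative assumptions, not values from the paper.
HUE_MAX = 50 / 360
SAT_RANGE = (0.15, 0.70)
VAL_MIN = 0.35


def is_skin(rgb):
    """Return True if an (r, g, b) pixel with 0..255 channels looks skin-toned."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return h <= HUE_MAX and SAT_RANGE[0] <= s <= SAT_RANGE[1] and v >= VAL_MIN


def face_bounding_box(image):
    """Return (top, left, bottom, right) enclosing all skin pixels, or None.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    """
    hits = [(y, x) for y, row in enumerate(image)
            for x, pixel in enumerate(row) if is_skin(pixel)]
    if not hits:
        return None
    ys = [y for y, _ in hits]
    xs = [x for _, x in hits]
    return min(ys), min(xs), max(ys), max(xs)
```

Only the pixels inside the returned box need to be passed to the later stages, which is where the saving in processing time comes from.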

Analyzing this facial region helps in locating the seven predominant regions of interest (ROIs), viz. two eyebrows, two eyes, nose, mouth and chin. Each of these regions is then filtered to obtain the desired facial features. Following this step, to spatially sample the contour of a certain permanent facial feature, one or more facial-feature detectors are applied to the pertinent ROI. For example, the contours of the eyes are localized in the ROIs of the eyes by using a single detector representing an adapted version of a hierarchical-perception feature location method [13]. We have performed feature extraction by a method that extracts features based on Haar-like features, and classifies them using a tree-like decision structure.

The contours of the facial features, generated by the facial feature detection method, are utilized for further analysis of the facial expressions shown. Similar to the approach taken by Pantic and Rothkrantz [2], we carry out feature point extraction under the assumption that the face images are non-occluded and in frontal view. We extract 22 fiducial points, which constitute the action units once grouped by the ROI they belong to. The last stage employs the FACS, which is still the most widely used method to classify facial deformations.
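As a rough illustration of how relative deviations of fiducial points might be measured, the sketch below compares an expressive face against a neutral one. The function name `point_deviations`, the choice of a reference pair for scale normalization, and the coordinates in the usage note are all assumptions for illustration, not part of the system described here.

```python
import math


def point_deviations(neutral, current, scale_pair):
    """Displacement of each fiducial point relative to the neutral face.

    `neutral` and `current` map a point id to (x, y) image coordinates.
    `scale_pair` names two points whose neutral distance is used to
    normalize displacements, so faces of different sizes are comparable
    (an assumed normalization, not taken from the paper).
    Image y grows downward, so a negative dy means the point moved up.
    """
    a, b = scale_pair
    scale = math.dist(neutral[a], neutral[b])
    if scale == 0:
        raise ValueError("reference points coincide")
    return {
        pid: ((current[pid][0] - x) / scale, (current[pid][1] - y) / scale)
        for pid, (x, y) in neutral.items()
        if pid in current
    }
```

For example, with hypothetical coordinates `neutral = {9: (0, 0), 10: (100, 0), 16: (30, 80), 17: (70, 80)}` and lip corners 16 and 17 both moved 5 pixels up in `current`, the returned dy for those points is negative, which corresponds to raised lip corners.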

The output of the above stage is a set of detected action units. Each emotion is the equivalent of a unique set of action units, represented as rules in first-order logic. These rules are utilized to interpret the most probable emotion depicted by the subject.

The rules can be described in first-order predicate logic or propositional logic in the form:

Gesture (joy):-
lip_corners (raised) ^ outer_eyes (narrowed) ^ cheeks (raised).
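A rule of this form can be evaluated against a set of detected action units as in the following sketch. Only the joy rule comes from the text above; the surprise rule, the scoring by fraction of satisfied conditions, and the name `most_probable_gesture` are illustrative assumptions.

```python
# Each rule is a conjunction of (feature, state) conditions.
RULES = {
    # Taken from the rule given in the text.
    "joy": {("lip_corners", "raised"), ("outer_eyes", "narrowed"),
            ("cheeks", "raised")},
    # Hypothetical second rule, included only so there is something to compare.
    "surprise": {("eyebrows", "raised"), ("eyes", "widened"),
                 ("mouth", "open")},
}


def most_probable_gesture(observed, rules=RULES):
    """Return (gesture, score), where score is the fraction of the rule's
    conditions found in `observed` (a set of (feature, state) pairs)."""
    scores = {gesture: len(conditions & observed) / len(conditions)
              for gesture, conditions in rules.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]
```

Scoring by fraction rather than requiring every condition lets a rule still fire when one action unit, such as the raising of the cheeks, could not be measured.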

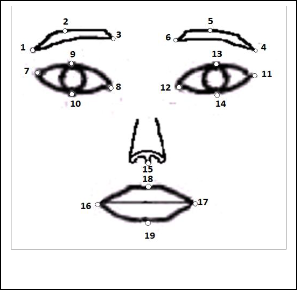

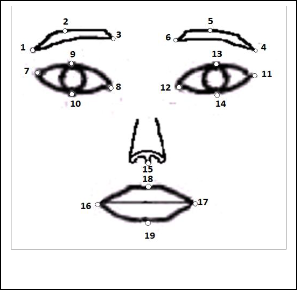

For instance, the following diagram shows the fiducial points marked on the human face. For a particular emo- tion, a combination of points are affected and monitored. For instance, for joy or happiness, the following action units are considered:

• Raising of corners of lips indicated by point 16 and point 17

• Narrowing of outer corners of eyes shown by point 9 and point 10, point 13, point 14.

• Raising of cheeks. It is difficult to quantify this action unit as points on the cheeks are difficult to pinpoint due to lack of sharp edges that can be detected or used as ref- erence in that region.

facial expressions, fuzzy logic comes into picture when a certain degree of deviation is to be made permissible from the expected set of values for a particular facial expres- sion.

This is necessary because each person’s facial muscles contort in slightly different ways while showing similar emotions. Thus the deviated features may have slightly different coordinates, yet still the algorithm should work irrespective of these minor differences. Fuzzy logic is used to increase the success rate of the process of recogni- tion of facial expressions across different people.

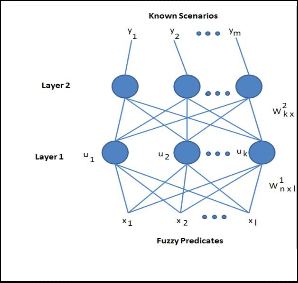

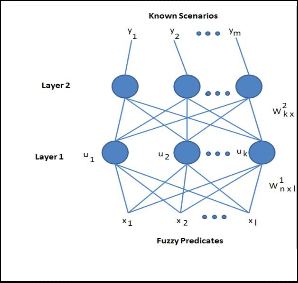

A neuro-fuzzy architecture incorporates both the tech-

Fig. 2. Facial Points used for the distances definition

Both face and feature localization are challenging be- cause of the face/features manifold owing to the high inter-personal variability (e.g. gender and race), the intra- personal changes (e.g. pose, expression, pres- ence/absence of glasses, beard, mustaches), and the ac- quisition conditions (e.g. illumination and image resolu- tion).

3.1 Artificial Neural Networks and Fuzzy Sets

We observed that other applications used an ANN [13] with one hidden layer [1] to implement recognition of gestures for a single facial feature (lips) alone. We apply one such ANN for each independent feature or Action Unit that will be interpreted for emotion analysis. We intend to utilize the concept of Neural Networks to assist the system to learn (in a semi-supervised manner) how to customize its recognition of expressions for different spe- cialized cases or environments.

Fuzzy logic [22] (and fuzzy sets) is a form of multi-valued logic derived from fuzzy set theory to deal with reasoning that is approximate rather than accurate. Pertaining to

Fig. 3. The Two-Layer Neurofuzzy Architecture

niques, merging known values and rules with variable conditions, as shown in Fig. 3. Chatterjee and Shi [18] have also utilized the neuro-fuzzy concept to classify ex- pressions, as well as to assign weights to facial features depending on their importance to the emotion detection process.
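The notion of a permissible degree of deviation can be made concrete with a fuzzy membership function. The sketch below uses a triangular membership and a minimum-based fuzzy AND; both are common textbook choices assumed here for illustration, and the names `triangular_membership` and `rule_strength` are hypothetical rather than taken from our implementation.

```python
def triangular_membership(value, expected, tolerance):
    """Degree in [0, 1] to which `value` matches `expected`.

    The degree is 1 at the expected value and falls linearly to 0 once the
    deviation reaches `tolerance` (an assumed, tunable parameter).
    """
    if tolerance <= 0:
        raise ValueError("tolerance must be positive")
    return max(0.0, 1.0 - abs(value - expected) / tolerance)


def rule_strength(memberships):
    """Fuzzy AND of several conditions, taken as the minimum degree."""
    return min(memberships)
```

A measured deviation that is close to, but not exactly, the expected value therefore still contributes a partial degree of match instead of being rejected outright.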

4 POTENTIAL ISSUES

4.1 Geographical Versatility

One of the fundamental problems faced by software that accepts images of the user as input is that it needs to account for the geographical variations bound to occur in them. Though people coming from one geographical area share certain physiological similarities, they tend to be distinct from those who belong to another region. These physical differences create many inconsistencies in the input obtained.

To tackle this issue, the software must employ a technique that widens the range of deviations it tolerates, so that divergence up to a certain degree can be absorbed and the corresponding feature still recognized. Support Vector Machines [10] help the software do this.

Fundamentally, a set of SVMs will be used to specify and incorporate rules for each facial feature and related possible gestures. The more rules there are for a particular feature, the greater will be the degree of accuracy, even for the smallest of micro-expressions and for higher-resolution images.

SVMs were originally designed for binary classification, and there are currently two types of approaches [10] for multi-class SVMs. The first is to construct and combine several binary classifiers (also called the “one-per-class” method), while the other is to consider all data in one optimization formulation, named the “one-one way”. SVMs can also be used to form slightly customized rules for drastically different geographical populations. Once the nativity of the subject is identified, the appropriate multi-class SVM can be used for further analysis and processing.

Thus, this method uses SVMs to take care of the differences and inconsistencies due to environmental variations.
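The combination step of the “one-per-class” method can be sketched as follows. The sketch does not train an SVM; it assumes that one linear decision function per class has already been learned, and the weights shown in the usage note are made-up values. The names `linear_score` and `one_per_class_predict` are hypothetical.

```python
def linear_score(weights, bias, features):
    """Signed score of a linear binary decision function w.x + b."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def one_per_class_predict(classifiers, features):
    """Combine binary 'this class versus the rest' classifiers.

    `classifiers` maps a class label to (weights, bias). The predicted
    label is the one whose decision function gives the largest score.
    """
    scores = {label: linear_score(w, b, features)
              for label, (w, b) in classifiers.items()}
    return max(scores, key=scores.get), scores
```

For instance, with the illustrative classifiers `{"raised": ([0.0, -1.0], 0.0), "lowered": ([0.0, 1.0], 0.0), "neutral": ([0.0, 0.0], 0.01)}`, a lip-corner deviation of `(0.0, -0.05)` is assigned to `"raised"`. A separate set of such classifiers could be kept per regional population and selected once the subject's group is known.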

4.2 Exploiting Facial Symmetry and Structure

A very simple and consistent feature of any human face is its basic structure. Eyes, eyebrows and forehead are always located in the uppermost region of the face, whereas the lips and chin belong in the lower region. The cheeks and nose lie in the central region.

When processing a detected facial region to extract facial features such as the eyes, nose and lips, it is useful to narrow the region of interest for each feature to the appropriate area of the facial region. For instance, when extracting the eyes, the algorithm will only search the upper half of the face. The portion of the facial region to be searched for each feature can be carefully calculated empirically after observing many faces. By utilizing this approach in our system, valuable processing time has been saved by reducing the size of the image to be processed for each feature.
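A minimal sketch of this narrowing is given below. The band fractions in `BANDS` are placeholders chosen for illustration; as noted above, the actual portions would be determined empirically. The function name `feature_search_region` is likewise an assumption.

```python
# Hypothetical vertical bands, as fractions of the face height measured from
# the top of the detected face box. Real values would be found empirically.
BANDS = {
    "eyebrows": (0.15, 0.40),
    "eyes": (0.20, 0.50),
    "nose": (0.35, 0.75),
    "mouth": (0.60, 0.90),
    "chin": (0.80, 1.00),
}


def feature_search_region(face_box, feature, bands=BANDS):
    """Return the sub-rectangle of the face to search for `feature`.

    `face_box` is (top, left, bottom, right) in pixels, bottom exclusive.
    Only the vertical extent is narrowed; the full width is kept.
    """
    top, left, bottom, right = face_box
    height = bottom - top
    start, end = bands[feature]
    return (top + round(start * height), left,
            top + round(end * height), right)
```

With these placeholder bands, the eye detector examines 30% of the face height instead of all of it, so the cost of any per-pixel detector drops proportionally.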

4.3 Personalization and Calibration

The process of emotion detection can be further enhanced by training the system using semi-supervised learning. Since past data about a particular subject’s emotive expression can be stored and made available to the system later, it may serve to improve the machine’s ‘understanding’ and be used as a base for inferring more about a person’s emotional state from a more experienced perspective.

One approach is to apply supervised learning at the inception of the system, for AU and gesture mapping. Here, the user gives feedback to the system, in the form of a gesture name, with respect to his/her images flashed on the screen. This calibration process may be allowed approximately a minute or two to complete. In this way the user effectively tutors the system to recognize basic gestures so that it can build on this knowledge in a semi-supervised manner later on. This feedback is stored in a database along with related entries for the parameters extracted from the region of interest (ROI) of the image displayed. The same feedback is also fed into the neuro-fuzzy network structure, which performs the translation of the input parameters to obtain the eventual result. It is used to shift the baseline for judging the input parameters and classifying them as part of the processing involved in the system. For further input received, the neural network then behaves in tandem with its new, personalized rule set.
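The idea of shifting the baseline from user feedback can be sketched in a few lines. Note that this sketch replaces the neuro-fuzzy network with a much simpler nearest-centroid classifier, purely to show how labelled calibration samples personalize the decision; the class name `PersonalBaseline` and its methods are hypothetical.

```python
import math
from collections import defaultdict


class PersonalBaseline:
    """Per-user store of labelled feature vectors gathered during calibration.

    A nearest-centroid rule stands in for the neuro-fuzzy network here;
    this is a simplifying assumption, not the method used in the system.
    """

    def __init__(self):
        self._samples = defaultdict(list)

    def add_feedback(self, gesture, features):
        """Record the user's label for one displayed image."""
        self._samples[gesture].append(tuple(features))

    def centroids(self):
        """Mean feature vector per gesture: the personalized baseline."""
        return {gesture: tuple(sum(col) / len(rows) for col in zip(*rows))
                for gesture, rows in self._samples.items()}

    def classify(self, features):
        """Return the gesture whose baseline is closest to `features`."""
        cents = self.centroids()
        if not cents:
            raise ValueError("no calibration data")
        return min(cents, key=lambda g: math.dist(cents[g], features))
```

Each additional labelled example moves the stored baseline for that gesture towards the way this particular user actually expresses it.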

The user can also request that training examples be gathered at discrete time intervals and provide a label for each. This can be combined with the displacements output by the feature extraction phase and added as a new example to the training set. Another way to increase the personalized information is to maintain an active history of the user, similar to a session. This data is used further on to implement a context-based understanding of the user, and to predict the likelihoods of emotions that may be felt in the near future.

Calibration and personalization would further increase the recognition performance and allow a tradeoff between generality and precision to be made. By applying these methods, the system in focus is expected to recognize the user’s facial gestures with higher accuracy; this approach is highly suitable for developing a personalized, user-specific application.

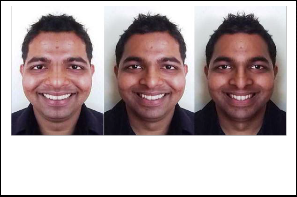

4.4 Dealing with Missing Values

The uncontrolled conditions of the real world and the numerous methods that can be employed to obtain the information for processing can cause extensive occlusions and create a vast scope for missing values in the input. The problem of missing values has long been considered an essential issue in the history of linear PCA and has been investigated in depth [24][25]. In this paper, the problem of missing values is tackled by taking into consideration the facial symmetry of the subject.

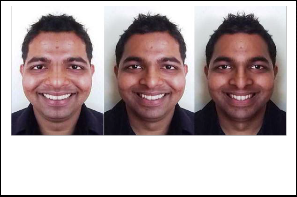

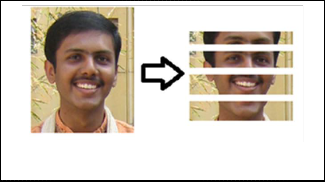

Although human faces are not exactly symmetrical for most expressions, we create perfectly symmetrical faces using the left or right side of the face (depending on which one is clearer). We call this the reference image. The creation of the reference image using the left and right sides of the face respectively is shown in Fig. 4.

Fig. 4. Left: original face, Centre: Symmetrical face created using the right side, Right: Symmetrical face created using the left side.

The original image is first scanned for occluded or blank areas in the face, which constitute the set of missing values. After all the locations of blockages have been identified, the reference image is superimposed on this scanned image. During the superimposition, the facial symmetry is utilized to complete the input values wherever necessary, and the missing values are thus taken care of.
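The two steps, building the symmetric reference image and superimposing it on the occluded original, can be sketched on a small grid. The sketch assumes an upright, centred face whose axis of symmetry is the vertical midline of the image, and it marks missing values with `None`; both are simplifying assumptions, as are the function names.

```python
def symmetric_reference(image, side="left"):
    """Build a perfectly symmetric image by mirroring the chosen half.

    `image` is a list of rows of pixel values. For odd widths the middle
    column is kept as it is.
    """
    if side not in ("left", "right"):
        raise ValueError("side must be 'left' or 'right'")
    reference = []
    for row in image:
        width = len(row)
        half = (width + 1) // 2
        new_row = []
        for x in range(width):
            kept = x < half if side == "left" else x >= width - half
            new_row.append(row[x] if kept else row[width - 1 - x])
        reference.append(new_row)
    return reference


def superimpose(original, reference):
    """Fill missing (None) values of `original` from `reference`."""
    return [[r if o is None else o for o, r in zip(orow, rrow)]
            for orow, rrow in zip(original, reference)]
```

Pixels that are present in the original are never overwritten, so the asymmetry of the real expression is preserved wherever it was actually observed.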

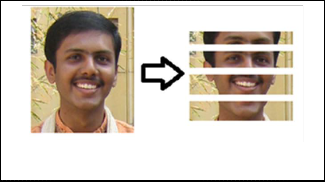

4.5 Overcoming Time Constraints

The main challenge in analyzing the face for the expression being portrayed is the amount of processing time required. This becomes a bottleneck in real-time scenarios. One way of overcoming this time constraint is to divide the image into various regions of interest and analyze each independently in parallel. For instance, the face can be divided into regions for the mouth, chin, eyes, eyebrows, etc., and the parallel analyses of these can be combined to get the final result. This approach can increase the throughput of the system when several processors are available.
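The delegation of regions to parallel workers can be sketched with the Python standard library. The function name `analyse_regions_in_parallel` and the `analyse` callback are placeholders; the per-region analysis itself is whatever feature detector the system applies.

```python
from concurrent.futures import ThreadPoolExecutor


def analyse_regions_in_parallel(regions, analyse, max_workers=None):
    """Run `analyse(name, data)` for every region concurrently.

    `regions` maps a region name (e.g. "mouth") to its image data.
    Returns a dict mapping each name to the result of its analysis.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(analyse, name, data)
                   for name, data in regions.items()}
        # result() blocks until that region's analysis has finished.
        return {name: future.result() for name, future in futures.items()}
```

For CPU-bound analysis written in pure Python, a process pool (`concurrent.futures.ProcessPoolExecutor`, which offers the same `submit` interface) is usually the better fit, since threads in CPython do not run Python bytecode in parallel.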

Fig. 5. Delegation of Image Components to Parallel Processors.

5 RESULTS

In this section we present the effectiveness of our system’s facial expression recognition capability as well as subsequent emotion classification. The confusion matrix in Table 1 shows, for a dataset of images depicting a particular emotion, how many were identified correctly and how many were confused with other emotions. The dataset comprised images taken of an expert user, having knowledge of what a typical emotion is expected to show as per universal norms described by Ekman [12]. Our dataset comprised 73 images of regionally different faces depicting Joy, Sorrow, Surprise and Neutral emotive states.

As is evident from Table 1, our system shows greater accuracy for Joy with an 81.8% accuracy rate. The average accuracy of our system is 70.5%.

TABLE 1
PERSON-DEPENDENT CONFUSION MATRIX FOR TRAINING AND TEST DATA SUPPLIED BY AN EXPERT USER

An Expert User in this context is a User of the Software who understands the relation between Action Units and Facial Gestures. Such a user is also aware of what Action Units are expected to form a particular expression the system is capable of detecting.

These results can be further improved by personalizing the system as discussed in the previous section. A personalized system is tuned to its user’s specific facial actions, and hence, after a calibration period, can be expected to give an average accuracy of up to 90%.

Furthermore, personalization can also be generalized to a regional population, for example, North America, East Asia, India, Africa, etc. Models for each regional area are prepared, and can be brought into the calibration phase where the system tunes to the facial structure of the current user.
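For readers who wish to reproduce accuracy figures of the kind reported in this section, the sketch below shows one way to derive per-class and average accuracy from a confusion matrix. It assumes the average is the unweighted mean of the per-class accuracies, which may differ from how the 70.5% figure was computed, and the counts in the usage note are toy values with placeholder class names, not the data behind Table 1.

```python
def per_class_accuracy(matrix, labels):
    """Accuracy per true class.

    matrix[i][j] is the number of images of class labels[i] that were
    classified as labels[j]; the diagonal holds the correct decisions.
    """
    return {label: row[i] / sum(row)
            for i, (label, row) in enumerate(zip(labels, matrix))}


def average_accuracy(matrix, labels):
    """Unweighted mean of the per-class accuracies (an assumed definition)."""
    accuracies = per_class_accuracy(matrix, labels)
    return sum(accuracies.values()) / len(accuracies)
```

With the toy matrix `[[3, 1], [1, 1]]` and labels `["A", "B"]`, class A has accuracy 0.75, class B has 0.5, and the average is 0.625.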

6 FUTURE SCOPE AND APPLICATIONS

As human facial expression recognition is a very elementary process, it is useful to evaluate the mood or emotional state of a subject under observation. As such, tremendous potential lies untapped in this domain. The basic idea of a machine being able to comprehend the human emotive state can be put to use in innumerable scenarios, a few of which we have mentioned here:

• The ability to detect and track a user’s state of mind has the potential to allow a computing system to offer relevant information when a user needs help, not just when the user requests help. For instance, inducing a change in the room ambience by judging the mood of the person entering it.

• Help people in emotion-related research to improve the processing of emotion data.

• Applications in surveillance and security. When a suspect is lying, video footage can capture micro-expressions missed by a trained eye. This system can help detect signs of fear, guilt or even aggression in the suspect and thus aid in the final decision of whether he or she is innocent or guilty. In this regard, lie detection amongst criminal suspects during interrogation is also a useful aspect in which this system can form a base.

• Patient Monitoring in hospitals to judge the effectiveness of prescribed drugs is one application to the Health Sector. In addition to this, diagnosis of diseases that alter facial features and psychoanalysis of patient mental state are further possibilities.

• Clever Marketing is feasible using emotional knowledge of a patron and can be done to suit what a patron might be in need of based on his/her state of mind at any instant.

• Detecting symptoms such as drowsiness, fatigue, or even inebriation can help in the process of Driver Monitoring. Such an application can play an integral role in reducing road mishaps to a great extent.

Also noteworthy is the advantage of reading emotions through facial cues, as our system does, over reading them through the study of audio data of human voices. Not only is the probability of noise affecting and distorting the input lower for our system, but there are also fewer ways to disguise or misread visual information as opposed to audio data, as is also stated by Busso et al. [23]. Facial expressions would form a vital part of a multimodal system for emotion analysis as well. The reliability of emotion detection can be greatly enhanced if the two methods can be combined.

7 CONCLUSION

Gaining insight into what a person may be feeling is very valuable for many reasons. Numerous useful products such as lie detectors and Patient Monitoring Systems can be built and enhanced by the use of a system such as this one. The future scope of this field is visualized to be practically limitless, with more futuristic applications visible on the horizon.

Our facial expression recognition system, utilizing a neuro-fuzzy architecture, is 70.5% accurate, which is approximately the level of accuracy expected from a support vector machine approach as well. Every system has its limitations. The accuracy of such a system can be increased manifold by introducing a personalized approach, as discussed in this paper. Although this particular implementation of facial expression recognition may be less accurate than an intuitive user, it is envisioned to contribute significantly to the field, as a base upon which similar work can be furthered and enhanced. Our aim in forming such a system is to form a standard protocol that may be used as a component in many of the applications that may benefit from an emotion-based HCI.

Empowering computers in this way has the potential of changing the very way a machine “thinks”. It gives them the ability to understand humans as ‘feelers’ rather than ‘thinkers’. With this in mind, this system can even be implemented in the context of Artificial Intelligence. As part of the relentless efforts of many to create intelligent machines, facial expressions and emotions have played and always will play a vital role.

ACKNOWLEDGMENT

The authors wish to thank the staff of Maharashtra Institute of Technology College of Engineering, Pune for their support and guidance, as well as their colleagues for their valuable feedback and thoughtful suggestions.

REFERENCES

[1] Piotr Dalka, Andrzej Czyzewski, “Human-Computer Interface Based on Visual Lip Movement and Gesture Recognition”, International Journal of Computer Science and Applications, ©Technomathematics Research Foundation Vol. 7 No. 3, pp. 124-139, 2010.

[2] M. Pantic, L.J.M. Rothkrantz, “Facial Gesture Recognition in face image sequences: A study on facial gestures typical for speech articulation”, Delft University of Technology.

[3] Claude C. Chibelushi, Fabrice Bourel, “Facial Expression Recognition: A Brief Overview”, 1-5, ©2002.

[4] Aysegul Gunduz, Hamid Krim, “Facial Feature Extraction Using Topological Methods”, © IEEE, 2003.

[5] Kyungnam Kim, “Face Recognition Using Principal Component Analysis”.

[6] Abdallah S. Abdallah, A. Lynn Abbott, and Mohamad Abou El-Nasr, “A New Face Detection Technique using 2D DCT and Self Organizing Feature Map”, World Academy of Science, Engineering and Technology 27, 2007.

[7] Sander Borsboom, Sanne Korzec, Nicholas Piel, “Improvements on the Human Computer Interaction Software: Emotion”, Methodology, 2007, 1-8.

[8] A. Mehrabian, “Communication without Words”, Psychology Today 2 (4) (1968) 53-56.

[9] M. Pantic, L.J.M. Rothkrantz, “Expert System for Automatic Analysis of Facial Expressions”, Image and Vision Computing 18 (2000) 881-905.

[10] Li Chaoyang, Liu Fang, Xie Yinxiang, “Face Recognition using Self-Organizing Feature Maps and Support Vector Machines”, Proceedings of the Fifth ICCIMA, 2003.

[11] Aitor Azcarate, Felix Hageloh, Koen van de Sande, Roberto Valenti, “Automatic Facial Emotion Recognition”, University of Amsterdam, 1-10, June 2005.

[12] P. Ekman, “Strong evidence for universals in Facial Expressions”, Psychol. Bull., 115(2): 268-287, 1994.

[13] A. Raouzaiou, N. Tsapatsoulis, V. Tzouvaras, G. Stamou and S. Kollias, “A Hybrid Intelligence System for Facial Expression Recognition”, EUNITE 2002, 482-490.

[14] Yanxi Liu, Karen L. Schmidt, Jeffrey F. Cohn, Sinjini Mitra, “Facial Asymmetry Quantification for Expression Invariant Human Identification”.

[15] Seyed Mehdi Lajevardi, Zahir M. Hussain, “A Novel Gabor Filter Selection Based on Spectral Difference and Minimum Error Rate for Facial Expression Recognition”, 2010 Digital Image Computing: Techniques and Applications, 137-140.

[16] Ketki K. Patil, S.D. Giripunje, Preeti R. Bajaj, “Facial Expression Recognition and Head Tracking in Video Using Gabor Filtering”, Third International Conference on Emerging Trends in Engineering and Technology, 152-157.

[17] Patrick Lucey, Simon Lucey, Jeffrey Cohn, “Registration Invariant Representations for Expression Detection”, 2010 Digital Image Computing: Techniques and Applications, 255-261.

[18] Suvam Chatterjee, Hao Shi, “A Novel Neuro-Fuzzy Approach to Human Emotion Determination”, 2010 Digital Image Computing: Techniques and Applications, 282-287.

[19] P. Li, S. L. Phung, A. Bouzerdoum, F. H. C. Tivive, “Automatic Recognition of Smiling and Neutral Facial Expressions”, 2010 Digital Image Computing: Techniques and Applications, 581-586.

[20] Irfan A. Essa, “Coding, Analysis, Interpretation and Recognition of Facial Expressions”, IEEE Transactions on Pattern Analysis and Machine Intelligence, July 1997, 757-763.

[21] Roberto Valenti, Nicu Sebe, Theo Gevers, “Facial Expression Recognition: A Fully Integrated Approach”, ICIAPW '07 Proceedings of the 14th International Conference of Image Analysis and Processing, 2007, 125-130.

[22] L. A. Zadeh, “Fuzzy Sets”, Information and Control 8, 1965, 338-353.

[23] Carlos Busso, Zhigang Deng, Serdar Yildirim, Murtaza Bulut, Chul Min Lee, Abe Kazemzadeh, Sungbok Lee, Ulrich Neumann, Shrikanth Narayanan, “Analysis of Emotion Recognition using Facial Expressions, Speech and Multimodal Information”, ACM 2004.

[24] N. F. Troje, H. H. Bülthoff, “How is bilateral symmetry of human faces used for recognition of novel views”, Vision Research 1998, 79-89.

[25] R. Thornhill, S. W. Gangestad, “Facial Attractiveness”, Trends in Cognitive Sciences, December 1999.
