International Journal of Scientific & Engineering Research, Volume 4, Issue 6, June-2013 121

ISSN 2229-5518

Efficient Content Based Image Retrieval Using Color and Texture

Mr. Milind V. Lande | Prof. Praveen Bhanodiya | Mr. Pritesh Jain

M.Tech Student, PCST, Indore | HOD, CSE Dept., PCST, Indore | Lecturer, CSE Dept., PCST, Indore

Mail id: milind.jdiet@gmail.com | Mail id: pcst.praveen@gmail.com | Mail id: Arihant.pritesh@gmail.com

Abstract:

Image classification is perhaps the most important part of digital image analysis. Retrieval-pattern-based learning, which aims to establish the relationship between the current and previous query sessions by analyzing image retrieval patterns, is among the most effective approaches. We propose a new feedback-based and content-based image retrieval system. Content-based image retrieval from large resources has become an area of wide interest in many applications. In this paper we present a content-based image retrieval system that uses color and texture as visual features to describe the content of an image region. Our contribution is the use of Gabor filters to extract texture features from arbitrarily shaped regions separated from an image after segmentation, in order to increase the system's effectiveness. In our simulation analysis, we compare retrieval results based on color features extracted from the whole image with results based on texture features extracted from some image regions. This approach offers a more effective and efficient way of retrieving images.

Keywords: Content Based Image Retrieval (CBIR), Global Based Features, Texture, Gabor Filters

I. INTRODUCTION

Advances in computer and network technologies, coupled with relatively cheap high-volume data storage devices, have brought tremendous growth in the number of digital images. Digital content is being generated at an exponential rate. Businesses, the media, government agencies and even individuals all need to organize their images somehow. As collections of digital images grow, finding a desired image on the web becomes a hard task. There is a need to develop an efficient method to retrieve digital images.

There are two approaches to image retrieval: the text-based approach and the content-based approach. Today, the most common way of retrieving images is through textual descriptions and categorization of images. This approach has some obvious shortcomings. Different people might categorize or describe the same image differently, which makes it hard to retrieve the image again. It is also time consuming when dealing with very large databases. Content based image retrieval (CBIR) is a way to get around these problems. CBIR systems search collections of images based on features that can be extracted from the image files themselves, without manual description. In past decades many CBIR systems have been developed; their common goal is to retrieve a desired image. Comparing two images and deciding whether they are similar is a relatively easy thing for a human to do. Getting a computer to do the same thing effectively is, however, a different matter. Many different approaches to CBIR have been tried, and many of these have one thing in common: the use of color histograms.

IJSER © 2013 http://www.ijser.org

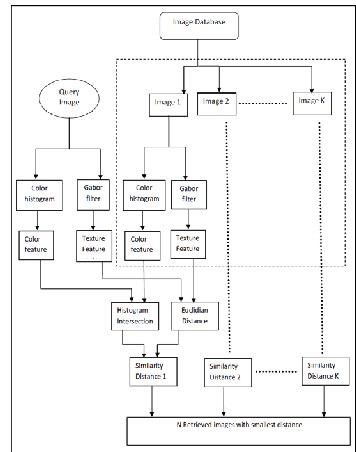

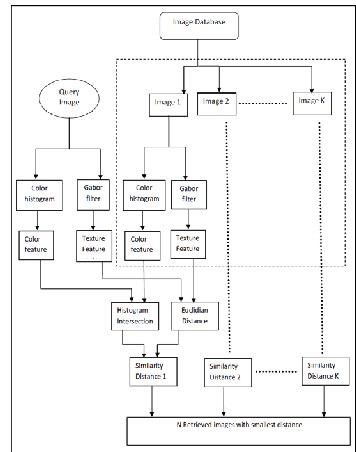

Content-based retrieval uses the contents of images to represent and access the images. A typical content-based retrieval system is divided into off-line feature extraction and online image retrieval. A conceptual framework for content-based image retrieval is illustrated in Figure 1.

In the off-line stage, the system automatically extracts visual attributes (color, shape, texture, and spatial information) of each image in the database from its pixel values and stores them in a separate database within the system, called a feature database. The feature data (also known as the image signature) for each visual attribute of each image is much smaller than the image data, so the feature database contains an abstraction (a compact form) of the images in the image database.

Content-Based Image Retrieval

II. LITERATURE SURVEY

In 2004, Issam El-Naqa, Yongyi Yang, Nikolas P. Galatsanos, Robert M. Nishikawa, and Miles N. Wernick, in their paper “A Similarity Learning Approach to Content-Based Image Retrieval: Application to Digital Mammography”, published in IEEE Transactions on Medical Imaging, Vol. 23, No. 10, October 2004, p. 1233, proposed a learning machine-based framework for modeling human perceptual similarity for content-based image retrieval. In 2006, Zhe-Ming Lu, Su-Zhi Li, and Hans Burkhardt, in their paper “A Content-Based Image Retrieval Scheme in JPEG Compressed Domain”, published in International Journal of Innovative Computing, Information and Control, Volume 2, Number 4 (ICIC International © 2006, ISSN 1349-4198), proposed an image retrieval scheme in the DCT domain that is suitable for retrieval of color JPEG images of different sizes. In 2007, Ryszard S. Choraś, in his paper “Image Feature Extraction Techniques and Their Applications for CBIR and Biometrics Systems”, identified the problems existing in CBIR and biometrics systems: describing image content and extracting image features. He described a possible approach to mapping image content onto low-level features. That paper investigated the use of a number of different color, texture and shape features for image retrieval in CBIR and biometrics systems.

digital images in large databases.


Content based means that the search makes use of the contents of the images themselves, rather than relying on human-input metadata such as captions or keywords. A content-based image retrieval system is a piece of software that implements CBIR. In CBIR, each image stored in the database has its features extracted and compared to the features of the query image. The process involves two steps.

A. Feature Extraction

The first step in this process is to extract the image features to a distinguishable extent. In this paper, global features are extracted to make the system more efficient. In this section, we introduce the image features used by our methods for image description. We classify the features as follows:

Texture Features

Color Features

The color descriptor used is composed of the following attributes:

Color expectancy:

$$E_i = \frac{1}{N}\sum_{j=1}^{N} P_{ij} \tag{1}$$

Color variance:

$$\sigma_i = \left(\frac{1}{N}\sum_{j=1}^{N}\left(P_{ij} - E_i\right)^{2}\right)^{1/2} \tag{2}$$

Skewness:

$$s_i = \left(\frac{1}{N}\sum_{j=1}^{N}\left(P_{ij} - E_i\right)^{3}\right)^{1/3} \tag{3}$$

where $P_{ij}$ is the value of the $i$-th color component at the $j$-th pixel and $N$ is the total number of pixels in the image. These values make it possible to estimate the average color, the dispersion of color values around the average, and the symmetry of their distribution over the whole image.
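As an illustration of Equations (1) to (3), the following minimal sketch computes the three attributes for each channel of an image given as a flat list of (R, G, B) tuples. The function names `color_moments` and `color_descriptor` and the input layout are assumptions made for this example, not part of the described system; the signed cube root is also an assumption, used so that a negative third moment still yields a real value.

```python
from typing import List, Sequence, Tuple


def color_moments(channel: Sequence[float]) -> Tuple[float, float, float]:
    """Return (E, sigma, s) of Equations (1)-(3) for one color channel."""
    n = len(channel)
    if n == 0:
        raise ValueError("channel must contain at least one pixel value")
    mean = sum(channel) / n                               # Equation (1)
    second = sum((p - mean) ** 2 for p in channel) / n
    third = sum((p - mean) ** 3 for p in channel) / n
    sigma = second ** 0.5                                 # Equation (2)
    # Equation (3); assumption: take a signed cube root because the
    # third central moment can be negative.
    sign = 1 if third >= 0 else -1
    skew = sign * abs(third) ** (1.0 / 3.0)
    return mean, sigma, skew


def color_descriptor(pixels: Sequence[Tuple[float, float, float]]) -> List[float]:
    """Concatenate the three moments of each of the three channels (9 values)."""
    descriptor: List[float] = []
    for i in range(3):
        descriptor.extend(color_moments([p[i] for p in pixels]))
    return descriptor
```

For a three-channel image this yields a nine-element vector, which is far smaller than the image itself.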

B. Matching

The second step involves matching these features to yield results that are visually similar. The basic idea behind CBIR is that, when building an image database, feature vectors (color, shape, texture, region or spatial features, features in some compressed domain, etc.) are extracted from the images and stored in another database for future use.

Fig.2. Block Diagram of CBIR System

When a query image is given, its feature vectors are computed. If the distance between the feature vectors of the query image and those of an image in the database is small enough, the corresponding database image is considered a match to the query. The search is usually based on similarity rather than on exact match, and the retrieval results are then ranked according to a similarity index.
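The matching step can be sketched as follows. The text does not name a particular distance at this point, so the Euclidean distance, the `threshold` parameter, and the dictionary-based feature database are assumptions chosen only to make the idea concrete.

```python
import math
from typing import Dict, List, Sequence, Tuple


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two feature vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def match(query: Sequence[float],
          database: Dict[str, Sequence[float]],
          threshold: float) -> List[Tuple[str, float]]:
    """Return (image name, distance) pairs within the threshold, closest first."""
    scored = [(name, euclidean(query, vec)) for name, vec in database.items()]
    hits = [(name, dist) for name, dist in scored if dist <= threshold]
    return sorted(hits, key=lambda item: item[1])
```

Because the result is sorted by distance, the same function also provides the similarity ranking described above.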

IV. COLOR SPACE

A color space is defined as a model for representing color in terms of intensity values. Typically, a color space defines a one- to four-dimensional space. A color component, or color channel, is one of the dimensions. A one-dimensional color space (i.e., one dimension per pixel) represents the gray-scale space.

A. The RGB color model


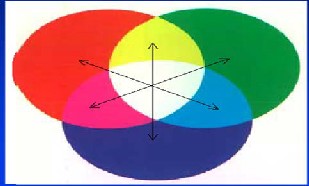

The RGB model uses three primary colors, red, green and blue, in an additive fashion to reproduce other colors. As this is the basis of most computer displays today, this model has the advantage of being easy to extract. In a true-color image each pixel has a red, a green and a blue value ranging from 0 to 255, giving a total of 16777216 different colors. One disadvantage of the RGB model is its behavior when the illumination in an image changes. The distribution of RGB values changes proportionally with the illumination, thus giving a very different histogram.

Fig.3. Additive mixing of red, green and blue

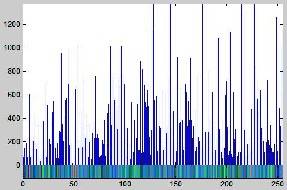

V. HISTOGRAM BASED IMAGE SEARCH

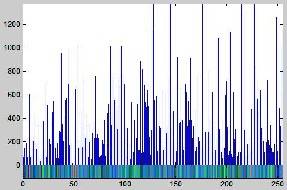

The color histogram for an image is constructed by counting the number of pixels of each color. In these studies, the development of the extraction algorithms follows a similar progression: (1) selection of a color space, (2) quantization of the color space, and (3) computation of histograms.

A. Color Histogram

The approach most frequently adopted for CBIR systems is based on the conventional color histogram (CCH), which contains the number of occurrences of each color, obtained by counting all image pixels having that color. Each pixel is associated with a specific histogram bin only on the basis of its own color; color similarity across different bins and color dissimilarity within the same bin are not taken into account. Since any pixel in the image can be described by three components in a certain color space (for instance, red, green and blue components in RGB space, or hue, saturation and value in HSV space), a histogram, i.e., the distribution of the number of pixels over the quantized bins, can be defined for each component. By default, the maximum number of bins one can obtain using the histogram function in MATLAB is 256. The CCH of an image indicates the frequency of occurrence of every color in the image. The appealing aspect of the CCH is its simplicity and ease of computation.
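A minimal sketch of a quantized conventional color histogram is given below. It assumes 8-bit RGB pixels supplied as a flat list of tuples and a uniform quantization into `bins_per_channel` levels per channel; both the helper names and the choice of four levels are illustrative assumptions, not values taken from the paper.

```python
from typing import List, Sequence, Tuple


def quantize(pixel: Tuple[int, int, int], bins_per_channel: int = 4) -> int:
    """Map an 8-bit RGB pixel to the index of its quantized color bin."""
    step = 256 // bins_per_channel
    r, g, b = (min(c // step, bins_per_channel - 1) for c in pixel)
    return (r * bins_per_channel + g) * bins_per_channel + b


def color_histogram(pixels: Sequence[Tuple[int, int, int]],
                    bins_per_channel: int = 4) -> List[float]:
    """Count pixels per bin and normalize so that the bins sum to 1."""
    hist = [0.0] * (bins_per_channel ** 3)
    for pixel in pixels:
        hist[quantize(pixel, bins_per_channel)] += 1.0
    total = len(pixels)
    return [count / total for count in hist] if total else hist
```

Normalizing by the pixel count makes histograms of images with different sizes comparable. Note that two slightly different reds fall into the same bin, while two similar colors on either side of a bin boundary do not, which is the limitation of the CCH described above.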

Fig.4. Sample Image


Fig.5. Corresponding Color Histogram

B. Texture Images

In the field of computer vision and image processing, there is no clear-cut definition of texture. This is because available texture definitions are based on texture analysis methods and the features extracted from the image. However, texture can be thought of as repeated patterns of pixels over a spatial domain; adding noise to the patterns and to their repetition frequencies results in textures that can appear random and unstructured. Texture properties are the visual patterns in an image that have properties of homogeneity that do not result from the presence of only a single color or intensity. The texture properties perceived by the human eye include, for example, regularity, directionality, smoothness, and coarseness; see Figure 6(a).

Fig.6.A) Sample Texture Image

In real-world scenes, texture perception can be far more complicated. The various brightness intensities give rise to a blend of different human perceptions of texture, as shown in Figure 6(b). Image textures have useful applications in image processing and computer vision. They include: recognition of image regions using texture properties, known as texture classification; recognition of texture boundaries using texture properties, known as texture segmentation; and texture synthesis, the generation of texture images from known texture models.

Fig.6.B) Complex Texture Image

VI. Global Feature Based CBIR System Design

In this section, we introduce the first part of our proposed CBIR system: global features based image retrieval (GBIR). This system defines the similarity between the contents of two images based on global features (i.e., features extracted from the whole image). Texture is one of the crucial primitives in human vision, and texture features have been used to identify the contents of images. Moreover, texture can be used to describe the contents of images, such as clouds, bricks, hair, etc. Both identifying and describing the contents of an image are strengthened when texture is integrated with color, so that the details of the features of image objects that matter for human vision can be provided.


In this system, Gabor filters are used. They are a tool for texture feature extraction that has proved very effective in describing the visual content of an image via multi-resolution analysis. In addition, the color histogram technique is applied for color feature representation, combined with the histogram intersection technique as the color similarity measure. A similarity distance between two images is defined based on color and texture features to decide which images in the image database are similar to the query and should be returned to the user. The details of the proposed system are described in the following sections.
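The histogram intersection measure mentioned above can be sketched as follows, assuming both histograms are normalized to sum to 1 and have the same number of bins. Turning the similarity into a distance as one minus the intersection is an assumption made for this illustration.

```python
from typing import Sequence


def histogram_intersection(h1: Sequence[float], h2: Sequence[float]) -> float:
    """Sum of bin-wise minima; equals 1.0 for identical normalized histograms."""
    if len(h1) != len(h2):
        raise ValueError("histograms must have the same number of bins")
    return sum(min(a, b) for a, b in zip(h1, h2))


def intersection_distance(h1: Sequence[float], h2: Sequence[float]) -> float:
    """Assumed conversion to a distance: 0.0 means identical color distributions."""
    return 1.0 - histogram_intersection(h1, h2)
```

For example, the histograms [0.5, 0.5, 0.0] and [0.25, 0.25, 0.5] share half of their mass, so their intersection and their distance are both 0.5.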

A. Texture Feature Extraction

The texture feature is computed using Gabor wavelets. The Gabor function is chosen as a tool for texture feature extraction because of its widely acclaimed efficiency in this task. Manjunath and Ma [15] recommended Gabor texture features for retrieval after showing that Gabor features perform better than pyramid-structured wavelet transform features, tree-structured wavelet transform features and the multi-resolution simultaneous autoregressive model.

A total of twenty-four wavelets are generated from the "mother" Gabor function given in Equation using four scales of frequency and six orientations.

Redundancy, which is a consequence of the non-orthogonality of Gabor wavelets, is addressed by choosing the parameters of the filter bank to be a set of frequencies and orientations that cover the entire spatial frequency space, so as to capture as much texture information as possible, in accordance with the filter design in [15]. The lower and upper frequencies of the filters are set to 0.04 octaves and 0.5 octaves, respectively, the orientations are at intervals of 30 degrees, and the half-peak magnitudes of the filter responses in the frequency spectrum are constrained to touch each other, as shown in Figure 7 [15]. Note that because of the symmetric property of the Gabor function, only wavelets with center frequencies and orientations covering half of the frequency spectrum (0, π/6, π/3, π/2, 2π/3, 5π/6) are generated.
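The parameter grid of such a filter bank can be sketched as below. The lower and upper frequencies (0.04 and 0.5), the four scales and the six orientations come from the text; the geometric spacing of the center frequencies between the two limits is an assumption based on the usual Gabor wavelet bank design, and the function name is hypothetical.

```python
import math
from typing import List, Tuple


def gabor_bank_parameters(f_low: float = 0.04, f_high: float = 0.5,
                          scales: int = 4,
                          orientations: int = 6) -> List[Tuple[float, float]]:
    """Return (center frequency, orientation in radians) for every filter."""
    # Assumed geometric progression: each scale multiplies the frequency by
    # the same ratio, so the first scale is f_low and the last is f_high.
    ratio = (f_high / f_low) ** (1.0 / (scales - 1))
    freqs = [f_low * ratio ** m for m in range(scales)]
    # Orientations cover half of the spectrum: 0, pi/6, ..., 5*pi/6.
    thetas = [n * math.pi / orientations for n in range(orientations)]
    return [(f, t) for f in freqs for t in thetas]
```

With the default arguments the function returns 24 pairs, one per wavelet in the bank, with orientations spaced 30 degrees apart.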

Fig.7. 2-D Frequency Spectrum View of Gabor Filter Bank.

Algorithm 5.1: Texture Feature Extraction.

Purpose: to extract texture features from an image.

Input: RGB colored image.

Output: multi-dimension texture feature vector.

Procedure:

{

Step1: Convert the RGB image into gray level.

Step2: Construct a bank of 24 Gabor filters using the mother Gabor function with 4 scales and 6 orientations.

Step3: Apply the Gabor filters to the gray-level image by convolution.

Step4: Get the energy distribution of each of the 24 filter responses.

Step5: Compute the mean μ and the standard deviation σ of each energy distribution.

Step6: Return the texture vector T consisting of the 48 attributes calculated in Step5.

}
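The six steps of Algorithm 5.1 can be sketched in plain Python as follows. This is only an illustration under several assumptions that are not taken from the paper: the gray-level conversion uses the common luminance weights, every kernel is a fixed 7×7 complex Gabor with an isotropic Gaussian envelope of width `sigma`, the magnitude of the filter response is used as the energy, and only positions where the kernel fits entirely inside the image are evaluated.

```python
import cmath
import math
from typing import List, Sequence, Tuple

RGBImage = Sequence[Sequence[Tuple[float, float, float]]]


def to_gray(rgb: RGBImage) -> List[List[float]]:
    """Step1: gray level from RGB (assumed luminance weights)."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in rgb]


def gabor_kernel(freq: float, theta: float, sigma: float = 2.0,
                 half: int = 3) -> List[List[complex]]:
    """Step2: one complex Gabor kernel (Gaussian envelope times complex carrier)."""
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            xr = x * math.cos(theta) + y * math.sin(theta)
            envelope = math.exp(-(x * x + y * y) / (2.0 * sigma * sigma))
            row.append(envelope * cmath.exp(2j * math.pi * freq * xr))
        kernel.append(row)
    return kernel


def filter_magnitudes(gray: List[List[float]],
                      kernel: List[List[complex]]) -> List[float]:
    """Steps 3-4: convolve over the valid region and keep response magnitudes."""
    k = len(kernel)
    if len(gray) < k or len(gray[0]) < k:
        raise ValueError("image must be at least as large as the kernel")
    out = []
    for top in range(len(gray) - k + 1):
        for left in range(len(gray[0]) - k + 1):
            acc = 0j
            for dy in range(k):
                for dx in range(k):
                    # Flipped image indices make this a true convolution.
                    acc += gray[top + k - 1 - dy][left + k - 1 - dx] * kernel[dy][dx]
            out.append(abs(acc))
    return out


def texture_features(rgb: RGBImage, freqs: Sequence[float],
                     thetas: Sequence[float]) -> List[float]:
    """Steps 5-6: mean and standard deviation of every filter response."""
    gray = to_gray(rgb)
    features: List[float] = []
    for f in freqs:
        for t in thetas:
            mags = filter_magnitudes(gray, gabor_kernel(f, t))
            mean = sum(mags) / len(mags)
            std = math.sqrt(sum((m - mean) ** 2 for m in mags) / len(mags))
            features.extend([mean, std])
    return features
```

With four frequencies and six orientations the returned vector has 48 entries, matching Step6. A practical implementation would more likely rely on an optimized image-processing library, since the nested loops above are slow for full-size images.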

B. Color Feature Extraction

In this system we use global color histograms to extract the color features of images.

The main issues regarding the use of color histograms for image retrieval are the choice of color space, the quantization of the color space into a number of color bins, where each bin represents a number of neighboring colors, and the choice of a similarity metric.

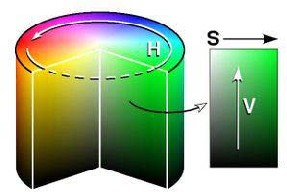

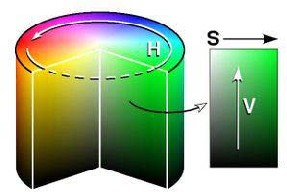

B.1. HSV Color Space

In the literature, no color space is known to be optimal for image retrieval; however, certain color spaces such as HSV, Lab, and Luv have been found to be well suited to content-based query by color. We adopt the HSV (Hue, Saturation, and Value) color space because of its simple transform from the RGB (Red, Green, Blue) color space, in which image formats are commonly represented. The HSV color space is a popular choice for manipulating color; it was developed to provide an intuitive representation of color and to approximate the way in which humans perceive and manipulate color. RGB to HSV is a nonlinear but reversible transformation. The hue (H) represents the dominant spectral component (color in its pure form), as in red, blue, or yellow. Adding white to the pure color changes the color: the less white, the more saturated the color is. This corresponds to the saturation (S). The value (V) corresponds to the brightness of the color.

The hue (color) is invariant to the illumination and camera direction, and is thus suitable for object recognition. Figure 8 shows the cylindrical representation of the HSV color space. The angle around the central vertical axis corresponds to “hue”, denoted by an angle from 0 to 360 degrees; the distance from the axis corresponds to “saturation”, denoted by the radius; and the distance along the axis corresponds to “lightness”, “value” or “brightness”, denoted by the height.

Fig.8. HSV Color Space

The HSV values of a pixel can be transformed from its RGB representation according to the following formulas:

$$H = \arctan\left(\frac{\sqrt{3}\,(G - B)}{(R - G) + (R - B)}\right)$$

$$S = 1 - \frac{\min\{R, G, B\}}{V}$$

$$V = \frac{R + G + B}{3}$$
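The transformation above can be sketched in Python as follows. The function name is hypothetical and the inputs are assumed to be scaled to the range 0 to 1. The sketch uses `math.atan2` instead of a plain arctangent, which is an assumption made so that the hue covers the full 0 to 360 degree range and gray pixels (zero denominator) do not cause a division by zero. Note that these formulas differ from the hexcone HSV model used by some libraries, where V is the maximum of R, G and B.

```python
import math
from typing import Tuple


def rgb_to_hsv_paper(r: float, g: float, b: float) -> Tuple[float, float, float]:
    """Convert RGB in [0, 1] to (H in degrees, S, V) using the formulas above."""
    v = (r + g + b) / 3.0
    # Black has no defined saturation; 0.0 is an assumed convention.
    s = 0.0 if v == 0 else 1.0 - min(r, g, b) / v
    # atan2 resolves the quadrant that a plain arctangent cannot distinguish.
    angle = math.atan2(math.sqrt(3.0) * (g - b), (r - g) + (r - b))
    h = math.degrees(angle) % 360.0
    return h, s, v
```

With this convention pure red maps to a hue of 0 degrees, pure green to approximately 120 degrees and pure blue to approximately 240 degrees, matching the cylindrical representation in Figure 8.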

VII. Image Retrieval Methodology

The image retrieval methodology proposed in this system goes through the steps shown in the block diagram in Figure 9. The user enters a query image, for which the system extracts both color and texture features as explained in the previous sections; the feature vectors of the database images are extracted and stored beforehand. Using the similarity metrics defined for color and texture, the similarity distances between the query image and every image in the database are calculated according to Equation and then sorted in ascending order. The first N similar target images (those with the smallest distance to the query) are retrieved and shown to the user, where N is the number of retrieved images required by the user.
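The ranking step can be sketched as follows. The equation that combines the color and texture distances is not reproduced in the text, so the weighted sum used here, the weight parameters `w_color` and `w_texture`, and the function name are all assumptions made for illustration.

```python
from typing import Dict, List, Tuple


def rank_images(color_dist: Dict[str, float],
                texture_dist: Dict[str, float],
                n: int,
                w_color: float = 0.5,
                w_texture: float = 0.5) -> List[Tuple[str, float]]:
    """Return the n database images with the smallest combined distance."""
    # Assumed combination rule: weighted sum of the two distances.
    combined = {
        name: w_color * color_dist[name] + w_texture * texture_dist[name]
        for name in color_dist
    }
    ranked = sorted(combined.items(), key=lambda item: item[1])  # ascending
    return ranked[:n]
```

The two dictionaries hold the precomputed distances between the query and each database image, keyed by a hypothetical image name. Changing the weights shifts the ranking toward color or toward texture.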

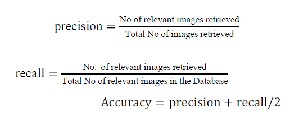

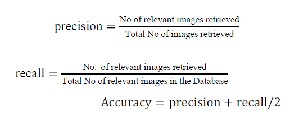

Retrieval Efficiency

The retrieval efficiency, namely recall, precision and accuracy, was calculated for 20 color images from the image database. Standard formulas were used to compute these parameters.
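The standard definitions of precision and recall can be sketched as below: precision is the fraction of retrieved images that are relevant, and recall is the fraction of relevant images that are retrieved. The function name and the use of image names as identifiers are assumptions for this example; accuracy is left out because the text does not state which definition was used.

```python
from typing import Iterable, Tuple


def precision_recall(retrieved: Iterable[str],
                     relevant: Iterable[str]) -> Tuple[float, float]:
    """Return (precision, recall) for one query."""
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall
```

For instance, if four images are retrieved and two of them are among three relevant images, precision is 0.5 and recall is two thirds.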

Fig.9. Block Diagram of Proposed GBIR System.

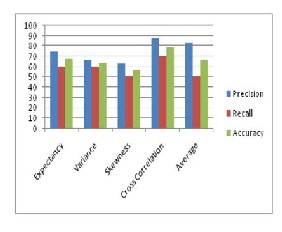

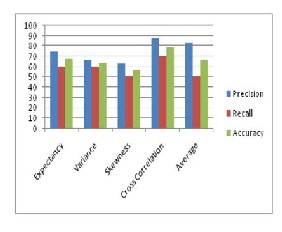

Figure 10: Comparative global descriptor attributes of the proposed CBIR system for various retrieval efficiency measurement parameters.


A comparison between global descriptor attributes shows that the cross correlation function achieves better retrieval results than all color descriptor attributes for all retrieval efficiency measurement parameters. However, the recall rate is the same for both E and  global descriptor attributes. Precision and recall rate of both E and  is also better than &one. It was found that the cross correlation function also works better than the result obtained by taking the average of all image descriptor attributes. The precision rate of the average value is greater than that for all color descriptor attributes, but the recall rate is the same as for  . From these comparison results, we can see that the cross correlation function achieves the highest retrieval efficiency.

VIII. Conclusion

Content based image retrieval is a challenging method of capturing relevant images from a large storage space. Although this area has been explored for decades, no technique has achieved the accuracy of human visual perception in distinguishing images. Whatever the size and content of the image database, a human being can easily recognize images of the same category.

From the very beginning of CBIR research, similarity computation between images has used either region-based or global features. Global features extracted from an image are useful for representing textured images that have no specific region of interest for the user. Region-based features are more effective for describing images that have distinct regions. Retrieval systems based on region features are computationally expensive because of the need for a segmentation process at the beginning of a query and the need to consider every image region in the similarity computation.

This paper showed that images retrieved using the global color histogram may not be semantically related even though they share a similar color distribution in some results. This drawback is reduced to some extent by calculating color feature attributes along with an efficient implementation.

IX. Future Scope

The following developments can be made in the future:

1. To obtain better performance, the system can automatically pre-classify the database into different semantic image classes (such as outdoor vs. indoor, landscape vs. cityscape, texture vs. non-texture images) and develop algorithms that are specific to a particular semantic image class.

2. Demonstration of using different color and texture weights in Equation and their effect on the retrieval results.

3. Further work and testing are needed for the approaches proposed in this paper to be effective. Finding good parameters is another way to improve the histogram-based search algorithm. There are several difficulties associated with the color histogram (CH), as mentioned above.

X. REFERENCES

[1] Shengjiu Wang, “A Robust CBIR Approach Using Local Color Histograms,” Technical Report TR 01-03, Department of Computing Science, University of Alberta, Canada, October 2001.

[2] R. Schettini, G. Ciocca, S. Zuffi, “A survey of methods for color image indexing and retrieval in image databases,” Color Imaging Science: Exploiting Digital Media (R. Luo, L. MacDonald, eds.), J. Wiley, 2001.

[3] R. Russel, P. Sinha, “Perceptually based Comparison of Image Similarity Metrics,” MIT AI Memo 2001-014, Massachusetts Institute of Technology, 2001.

[4] J.F. Omhover, M. Detyniecki and B. Bouchon-Meunier, “A Region Similarity Based Image Retrieval System,” The 10th International Conference on Information Processing and Management of Uncertainty in Knowledge-Based Systems, Perugia, Italy, 2004.

[5] http://en.wikipedia.org/wiki/RGB

[6] Ole Andreas Flaaton Jonsgard, “Improvements on color histogram based CBIR,” 2005.

[7] http://en.wikipedia.org/wiki/HSV_color_space

[8] Ryszard S. Choraś, “Image Feature Extraction Techniques and Their Applications for CBIR and Biometrics Systems,” International Journal of Biology and Biomedical Engineering, 2007.

[9] H. B. Kekre, Dhirendra Mishra, “CBIR using Upper Six FFT Sectors of Color Images for Feature Vector Generation,” International Journal of Engineering and Technology, Vol. 2(2), 2010, 49-54.

[10] Ch. Srinivasa Rao, S. Srinivas Kumar, B. N. Chatterji, “Content Based Image Retrieval using Contourlet Transform,” ICGST-GVIP Journal, Volume 7, Issue 3, November 2007.

[11] H. B. Kekre, Kavita Sonavane, “CBIR Using Kekre’s Transform over Row Column Mean and Variance Vector,” (IJCSE) International Journal on Computer Science and Engineering, Vol. 02, No. 05, 2010, 1609-1614.

[12] S. Nandagopalan, B. S. Adiga, and N. Deepak, “A Universal Model for Content-Based Image Retrieval,” World Academy of Science, Engineering and Technology 46, 2008.

[13] P. B. Thawari and N. J. Janwe, “CBIR Based On Color And Texture,” International Journal of Information Technology and Knowledge Management, January-June 2011, Volume 4, No. 1, pp. 129-132.

[14] Jalil Abbas, Salman Qadri, Muhammad Idrees, Sarfraz Awan, Naeem Akhtar Khan, “Frame Work For Content Based Image Retrieval (Textual Based) System,” Journal of American Science, 2010; 6(9).

[15] Ramesh Babu Durai C, “A Generic Approach To Content Based Image Retrieval Using DCT And Classification Techniques,” (IJCSE) International Journal on Computer Science and Engineering, Vol. 02, No. 06, 2010, 2022-2024.

[16] Ch. Srinivasa Rao, S. Srinivas Kumar and B. Chandra Mohan, “CBIR Using Exact Legendre Moments And Support Vector Machine,” International Journal Of Multimedia And Its Applications, Vol. 2, No. 2, May 2010.

[17] Hichem Bannour, Lobna Hlaoua, Bechir Ayeb, “Survey Of The Adequate Descriptor For Content Based Image Retrieval On The Web: Global Versus Local Features,” 2009.

[18] Hiremath P.S. and Jagadeesh Pujari, “Content Based Image Retrieval using Color Boosted Salient Points and Shape features of an image,” International Journal of Image Processing, Volume (2) : Issue (1).

[19] Zhe-Ming Lu, Su-Zhi Li and Hans Burkhardt, “A Content-Based Image Retrieval Scheme In JPEG Compressed Domain,” International Journal of Innovative Computing, Information and Control, ICIC International © 2006, ISSN 1349-4198, Volume 2, Number 4, August 2006.

[20] Issam El-Naqa, Yongyi Yang, Nikolas P. Galatsanos, Robert M. Nishikawa, and Miles N. Wernick, “A Similarity Learning Approach to Content-Based Image Retrieval: Application to Digital Mammography,” IEEE Transactions on Medical Imaging, Vol. 23, No. 10, October 2004, p. 1233.

[21] Joel Ponianto, “Content-Based Image Retrieval Indexing,” School of Computer Science and Software Engineering, Monash University, 2005.

[22] Sameer Antani, L. Rodney Long, George R. Thoma, “Content-Based Image Retrieval for Large Biomedical Image Archives,” MEDINFO 2004, M. Fieschi et al. (Eds), Amsterdam: IOS Press, © 2004 IMIA.

[23] Stefan Uhlmann, Serkan Kiranyaz and Moncef Gabbouj, “A Regionalized Content-Based Image Retrieval Framework,” 15th European Signal Processing Conference (EUSIPCO 2007), Poznan, Poland, September 3-7, 2007, copyright by EURASIP.

[24] Ricardo da S. Torres, Alexandre X. Falcão, “A New Framework to Combine Descriptors for Content-based Image Retrieval,” CIKM’05, October 31-November 5, 2005, Bremen, Germany.

[25] Zhong Su, Hongjiang Zhang, Stan Li, and Shaoping Ma, “Relevance Feedback in Content-Based Image Retrieval: Bayesian Framework, Feature Subspaces, and Progressive Learning,” IEEE Transactions on Image Processing, Vol. 12, No. 8, August 2003.